Aidge learning API

Introduction

Aidge provides its own native learning module, Aidge Learning. Its primary purpose is to support Aidge’s intermediate representation (IR) for automated model optimization, notably advanced quantization-aware training (QAT), across heterogeneous deployment targets. Its goals are:

- Automated quantization schemes that users can easily verify and customize;

- Formalized and fully reproducible support for industry-standard models and their derivatives;

- A focus on custom hardware development, which requires specific training strategies.

Basic example of a training pipeline in Aidge (for a single epoch):

    import aidge_core
    import aidge_learning
    from tqdm import tqdm

    # Define the model (or load an existing ONNX one)
    model = ...
    model.set_backend("cuda")

    # Initialize parameters (weights and biases)
    aidge_core.init_producer(model, "Producer-1>(Conv2D|FC)", aidge_core.he_filler)
    aidge_core.init_producer(model, "Producer-2>(Conv2D|FC)", lambda x: aidge_core.constant_filler(x, 0.01))

    # Define the data provider (using either aidge_backend_opencv or torch DataLoader)
    dataprovider = ...

    # Define the optimizer and set the parameters to train
    opt = aidge_learning.SGD(momentum=0.9)
    opt.set_parameters(aidge_core.producers(model))

    # Define the learning rate scheduler
    learning_rates = aidge_learning.constant_lr(0.01)
    opt.set_learning_rate_scheduler(learning_rates)

    scheduler = aidge_core.SequentialScheduler(model)

    for i, (input, label) in enumerate(tqdm(dataprovider)):
        # Forward pass
        pred = scheduler.forward(data=[input])[0]

        # Reset the gradient
        opt.reset_grad()

        # Compute loss
        loss = aidge_learning.loss.CELoss(pred, label)

        # Compute accuracy (optional)
        acc = aidge_learning.metrics.Accuracy(pred, label, 1)[0]

        # Backward pass
        scheduler.backward()

        # Optimize the parameters
        opt.update()

About gradient handling

There is no “autograd” mechanism in Aidge, because the computational graph is explicitly defined, and the gradient computation path is determined from the outset.

Gradients are accumulated by default in the backward implementations of the operators.

Gradients are automatically reset just before each forward pass (the reset is the first forward hook registered by default for all nodes), unless the node has the grad.no_reset attribute.

When producer nodes are added to the optimizer parameters list with aidge_learning.Optimizer.set_parameters, the attribute grad.no_reset is automatically set for these nodes.

As a result, their gradients are not reset automatically.

For such nodes, a call to aidge_learning.Optimizer.reset_grad is required to clear the gradients.

This allows gradients to be accumulated across multiple forward-backward iterations before being reset.
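The reset rule described above can be pictured with a small toy model. This is a sketch in plain Python, not Aidge code: the ToyNode class and its fields are hypothetical and exist only to mirror the behaviour stated in the text (accumulation in backward, reset before forward unless grad.no_reset is set).

```python
# Toy model of the reset rule; this is NOT Aidge code.
# ToyNode and its fields are hypothetical names used only for illustration.


class ToyNode:
    def __init__(self, name, no_reset=False):
        self.name = name
        self.no_reset = no_reset  # stands in for the grad.no_reset attribute
        self.grad = 0.0

    def forward(self):
        # Models the first forward hook: clear the gradient unless no_reset is set.
        if not self.no_reset:
            self.grad = 0.0

    def backward(self, grad_value):
        # Backward implementations accumulate instead of overwriting.
        self.grad += grad_value


activation = ToyNode("activation")         # ordinary node
weight = ToyNode("weight", no_reset=True)  # node registered in the optimizer

for _ in range(3):  # three forward-backward iterations
    for node in (activation, weight):
        node.forward()
    for node in (activation, weight):
        node.backward(1.0)

print(activation.grad)  # holds the gradient of the last iteration only
print(weight.grad)      # holds the sum over the three iterations
```

In this toy model the weight gradient keeps growing until it is cleared explicitly, which is the role played by aidge_learning.Optimizer.reset_grad in the real API.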

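When accumulating gradients over several iterations, scale each loss by 1 / nb_iters using the scaling argument of the loss function, so that the accumulated gradient is the average over the iterations rather than their sum, as shown in the example below: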

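    from tqdm import tqdm

    # Number of iterations before each optimizer update step
    nb_iters = 8

    opt.reset_grad()

    # Keep a handle on the progress bar so the loss can be displayed
    pbar = tqdm(dataprovider)
    for i, (input, label) in enumerate(pbar):
        pred = scheduler.forward(False, data=[input])[0]

        # Scale the loss so that the accumulated gradients are averaged over nb_iters
        loss = aidge_learning.loss.CELoss(pred, label, 1 / nb_iters)
        scheduler.backward()

        # Update every nb_iters iterations and on the last batch
        if (i + 1) % nb_iters == 0 or (i + 1) == len(dataprovider):
            opt.update()
            opt.reset_grad()

        pbar.set_postfix(loss=f"{loss[0]:.4f}")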
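To see why the loss is scaled by 1 / nb_iters, the following sketch checks numerically that summing the gradients of the scaled per-iteration losses gives the gradient of the mean loss. It uses a one-parameter linear model and a squared error in plain Python. The model, the sample values and the helper name grad_squared_error are illustrative assumptions and do not come from Aidge.

```python
# Model: pred = w * x, loss = (pred - y) ** 2, so d(loss)/dw = 2 * (w * x - y) * x.
def grad_squared_error(w, x, y, scaling=1.0):
    """Gradient of the scaled squared error with respect to w."""
    return scaling * 2.0 * (w * x - y) * x


w = 0.5
samples = [(1.0, 2.0), (2.0, 3.0), (3.0, 5.0), (4.0, 4.0)]  # (x, y) pairs
nb_iters = len(samples)

# Accumulate the gradients of the scaled losses, one iteration at a time.
accumulated = 0.0
for x, y in samples:
    accumulated += grad_squared_error(w, x, y, scaling=1.0 / nb_iters)

# Gradient of the mean loss, computed in one go for comparison.
reference = sum(grad_squared_error(w, x, y) for x, y in samples) / nb_iters

print(abs(accumulated - reference) < 1e-12)
```

Without the scaling, the accumulated gradient would be nb_iters times larger, which is equivalent to multiplying the learning rate by nb_iters.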

Components

Fillers

The aidge_core.init_producer function matches Producer nodes in the graph with a graph-matching expression and applies the given filler to each matched Producer.

Usage example:

# Initialize the weights

aidge_core.init_producer(model, "Producer-1>(Conv2D|FC)", aidge_core.he_filler)

# Initialize the bias

aidge_core.init_producer(model, "Producer-2>(Conv2D|FC)", lambda x: aidge_core.constant_filler(x, 0.01))

Note: fillers are part of aidge_core, not aidge_learning.

The available fillers are:

- aidge_core.constant_filler(tensor: aidge_core.aidge_core.Tensor, value: object) -> None

- aidge_core.normal_filler(tensor: aidge_core.aidge_core.Tensor, mean: object = 0.0, stdDev: object = 1.0) -> None

- aidge_core.uniform_filler(tensor: aidge_core.aidge_core.Tensor, min: object, max: object) -> None

- aidge_core.xavier_uniform_filler(tensor: aidge_core.aidge_core.Tensor, scaling: object = 1.0, varianceNorm: aidge_core.aidge_core.VarianceNorm = <VarianceNorm.FanIn: 0>) -> None

- aidge_core.xavier_normal_filler(tensor: aidge_core.aidge_core.Tensor, scaling: object = 1.0, varianceNorm: aidge_core.aidge_core.VarianceNorm = <VarianceNorm.FanIn: 0>) -> None

- aidge_core.he_filler(tensor: aidge_core.aidge_core.Tensor, varianceNorm: aidge_core.aidge_core.VarianceNorm = <VarianceNorm.FanIn: 0>, meanNorm: object = 0.0, scaling: object = 1.0) -> None
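As a rough illustration of what a variance-normalized filler does, here is a plain-Python sketch of the textbook He initialization with fan-in normalization, which draws weights from a normal distribution with standard deviation sqrt(2 / fan_in). The helper names are hypothetical, the weight layout [out, in, kernel dims] is an assumption, and the exact formula used by aidge_core.he_filler (including its meanNorm and scaling arguments) may differ.

```python
import math
import random


def fan_in_of(shape):
    """Fan-in of a weight tensor assumed to be laid out as [out, in, *kernel_dims]."""
    fan_in = 1
    for dim in shape[1:]:
        fan_in *= dim
    return fan_in


def he_normal_values(shape, seed=0):
    """Draw weights from N(0, 2 / fan_in), the textbook He initialization."""
    rng = random.Random(seed)
    std = math.sqrt(2.0 / fan_in_of(shape))
    count = math.prod(shape)
    return [rng.gauss(0.0, std) for _ in range(count)], std


# Weights of a 3x3 convolution with 3 input channels and 16 output channels.
values, std = he_normal_values((16, 3, 3, 3))
print(len(values), std)
```

The standard deviation shrinks as the fan-in grows, which keeps the variance of the layer output roughly independent of the layer width.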

Losses

- aidge_learning.loss.MSE(graph: aidge_core.aidge_core.Tensor, target: aidge_core.aidge_core.Tensor, scaling: SupportsFloat | SupportsIndex = 1.0) -> aidge_core.aidge_core.Tensor

- aidge_learning.loss.BCE(graph: aidge_core.aidge_core.Tensor, target: aidge_core.aidge_core.Tensor, scaling: SupportsFloat | SupportsIndex = 1.0) -> aidge_core.aidge_core.Tensor

- aidge_learning.loss.CELoss(graph: aidge_core.aidge_core.Tensor, target: aidge_core.aidge_core.Tensor, scaling: SupportsFloat | SupportsIndex = 1.0) -> aidge_core.aidge_core.Tensor

- aidge_learning.loss.KD(student_prediction: aidge_core.aidge_core.Tensor, teacher_prediction: aidge_core.aidge_core.Tensor, temperature: SupportsFloat | SupportsIndex = 2.0) -> aidge_core.aidge_core.Tensor

- aidge_learning.loss.multiStepCELoss(graph: aidge_core.aidge_core.Tensor, target: aidge_core.aidge_core.Tensor, nbTimeSteps: SupportsInt | SupportsIndex, scaling: SupportsFloat | SupportsIndex = 1.0) -> aidge_core.aidge_core.Tensor
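To make the role of the scaling argument concrete, here is a plain-Python sketch of a softmax cross-entropy that multiplies the result by a scaling factor. It assumes one-hot targets and a mean over the batch. The target encoding and reduction actually used by aidge_learning.loss.CELoss are not stated in this page, so treat those choices, and the function names, as assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    shift = max(logits)
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(batch_logits, batch_targets, scaling=1.0):
    """Mean softmax cross-entropy over the batch, multiplied by `scaling`."""
    total = 0.0
    for logits, target in zip(batch_logits, batch_targets):
        probs = softmax(logits)
        total -= sum(t * math.log(p) for t, p in zip(target, probs))
    return scaling * total / len(batch_logits)


logits = [[2.0, 0.5, -1.0], [0.0, 0.0, 0.0]]
targets = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # one-hot labels (assumed encoding)

full = cross_entropy(logits, targets)
scaled = cross_entropy(logits, targets, scaling=1.0 / 8)
print(full, scaled)
```

Because the scaling multiplies the loss, it multiplies every gradient by the same factor, which is what makes it usable for gradient accumulation.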

Optimizers

The base optimizer class is aidge_learning.Optimizer:

- class aidge_learning.Optimizer

  - __init__(self: aidge_learning.aidge_learning.Optimizer) -> None

  - learning_rate(self: aidge_learning.aidge_learning.Optimizer) -> float

  - learning_rate_scheduler(self: aidge_learning.aidge_learning.Optimizer) -> Aidge::LRScheduler

  - parameters(self: aidge_learning.aidge_learning.Optimizer) -> list[aidge_core.aidge_core.Node]

  - reset_grad(self: aidge_learning.aidge_learning.Optimizer) -> None

  - set_learning_rate_scheduler(self: aidge_learning.aidge_learning.Optimizer, arg0: Aidge::LRScheduler) -> None

  - set_parameters(self: aidge_learning.aidge_learning.Optimizer, arg0: collections.abc.Sequence[aidge_core.aidge_core.Node]) -> None

  - update(self: aidge_learning.aidge_learning.Optimizer) -> None

The available optimizers are:

- class aidge_learning.Adam

  - __init__(self: aidge_learning.aidge_learning.Adam, beta1: SupportsFloat | SupportsIndex = 0.8999999761581421, beta2: SupportsFloat | SupportsIndex = 0.9990000128746033, epsilon: SupportsFloat | SupportsIndex = 9.99999993922529e-09) -> None

- class aidge_learning.SGD

  - __init__(self: aidge_learning.aidge_learning.SGD, momentum: SupportsFloat | SupportsIndex = 0.0, dampening: SupportsFloat | SupportsIndex = 0.0, weight_decay: SupportsFloat | SupportsIndex = 0.0) -> None
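The three SGD arguments can be read against the usual momentum update rule. The sketch below is plain Python and assumes the convention used by PyTorch's SGD, whose parameter names match: weight decay is added to the gradient, and the velocity is updated as momentum * v + (1 - dampening) * g. Whether aidge_learning.SGD follows exactly this rule is an assumption, and sgd_step is a hypothetical helper.

```python
def sgd_step(weights, grads, velocity, lr, momentum=0.0, dampening=0.0, weight_decay=0.0):
    """One SGD update on flat lists of floats; returns new weights and velocity."""
    new_weights, new_velocity = [], []
    for w, g, v in zip(weights, grads, velocity):
        g = g + weight_decay * w                  # L2 penalty folded into the gradient
        v = momentum * v + (1.0 - dampening) * g  # momentum buffer
        new_weights.append(w - lr * v)
        new_velocity.append(v)
    return new_weights, new_velocity


# Minimize sum(w ** 2), whose gradient is 2 * w.
weights = [1.0, -2.0]
velocity = [0.0, 0.0]
for _ in range(2):
    grads = [2.0 * w for w in weights]
    weights, velocity = sgd_step(weights, grads, velocity, lr=0.1, momentum=0.9)

print(weights)
```

With momentum set to 0.0 the velocity equals the current gradient and the rule reduces to plain gradient descent.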

Learning rate scheduling

The base learning rate scheduling class is aidge_learning.LRScheduler:

- class aidge_learning.LRScheduler

  - __init__(*args, **kwargs)

  - learning_rate(self: aidge_learning.aidge_learning.LRScheduler) -> float

  - lr_profile(self: aidge_learning.aidge_learning.LRScheduler, arg0: SupportsInt | SupportsIndex) -> list[float]

  - set_nb_warmup_steps(self: aidge_learning.aidge_learning.LRScheduler, arg0: SupportsInt | SupportsIndex) -> None

  - step(self: aidge_learning.aidge_learning.LRScheduler) -> int

  - update(self: aidge_learning.aidge_learning.LRScheduler) -> None

The available learning rate schedulers are:

- aidge_learning.constant_lr(initial_lr: SupportsFloat | SupportsIndex) -> aidge_learning.aidge_learning.LRScheduler

- aidge_learning.step_lr(initial_lr: SupportsFloat | SupportsIndex, step_size: SupportsInt | SupportsIndex, gamma: SupportsFloat | SupportsIndex = 0.10000000149011612) -> aidge_learning.aidge_learning.LRScheduler

Example:

max_steps = len(train_loader) * nb_epochs

learning_rate = aidge_learning.step_lr(0.1, max_steps // 4, 0.1)

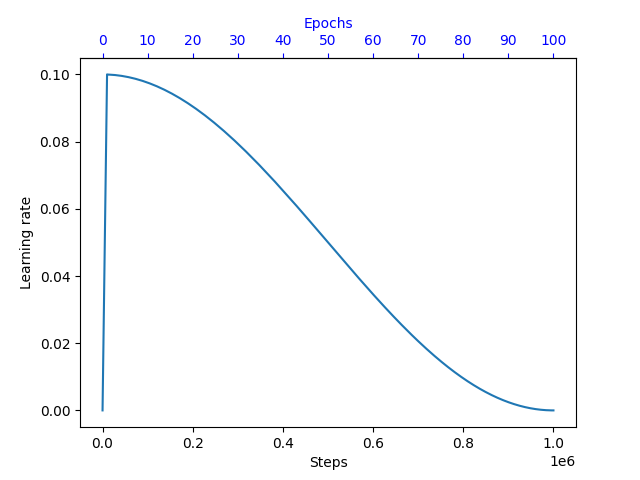

- aidge_learning.cosine_lr(initial_lr: SupportsFloat | SupportsIndex, max_steps: SupportsInt | SupportsIndex, min_decay: SupportsFloat | SupportsIndex = 0.0) -> aidge_learning.aidge_learning.LRScheduler

Example:

max_steps = len(train_loader) * nb_epochs

learning_rate = aidge_learning.cosine_lr(0.1, max_steps, 0.0001)

learning_rate.set_nb_warmup_steps(len(train_loader))
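The shape of the step and cosine schedules can be sketched in plain Python. The step profile below multiplies the rate by gamma every step_size steps, which is the usual definition of step decay. For the cosine profile, min_decay is treated as the fraction of initial_lr reached at max_steps. That interpretation, the absence of warmup, and the function names are assumptions, not a description of Aidge's implementation.

```python
import math


def step_profile(initial_lr, step_size, gamma, nb_steps):
    """Step decay: multiply the rate by gamma every step_size steps."""
    return [initial_lr * gamma ** (step // step_size) for step in range(nb_steps)]


def cosine_profile(initial_lr, max_steps, min_decay, nb_steps):
    """Cosine decay from initial_lr down to initial_lr * min_decay (assumed meaning)."""
    profile = []
    for step in range(nb_steps):
        progress = min(step, max_steps) / max_steps
        cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
        profile.append(initial_lr * ((1.0 - min_decay) * cosine + min_decay))
    return profile


steps = step_profile(0.1, step_size=25, gamma=0.1, nb_steps=100)
cosine = cosine_profile(0.1, max_steps=100, min_decay=0.0001, nb_steps=101)

print(steps[0], steps[25], steps[99])
print(cosine[0], cosine[100])
```

With step_size set to a quarter of the total number of steps, as in the step_lr example above, the rate drops three times over the run.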

Metrics

- aidge_learning.metrics.Accuracy(prediction: aidge_core.aidge_core.Tensor, target: aidge_core.aidge_core.Tensor, axis: SupportsInt | SupportsIndex) -> aidge_core.aidge_core.Tensor
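For reference, the usual definition of top-1 accuracy is sketched below in plain Python: the predicted class is the index of the largest score along the class axis, and the accuracy is the fraction of samples whose predicted class equals the target. Targets given as class indices and a ratio as the return value are assumptions. The tensor returned by aidge_learning.metrics.Accuracy may be encoded differently, and top1_accuracy is a hypothetical helper.

```python
def top1_accuracy(predictions, targets):
    """Fraction of samples whose highest-scoring class equals the target index."""
    correct = 0
    for scores, target in zip(predictions, targets):
        # Argmax over the class axis (axis 1 of a [batch, classes] layout).
        predicted_class = max(range(len(scores)), key=scores.__getitem__)
        if predicted_class == target:
            correct += 1
    return correct / len(targets)


predictions = [[0.1, 0.9], [0.8, 0.2], [0.3, 0.7]]
targets = [1, 0, 0]

accuracy = top1_accuracy(predictions, targets)
print(accuracy)
```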