Robust training

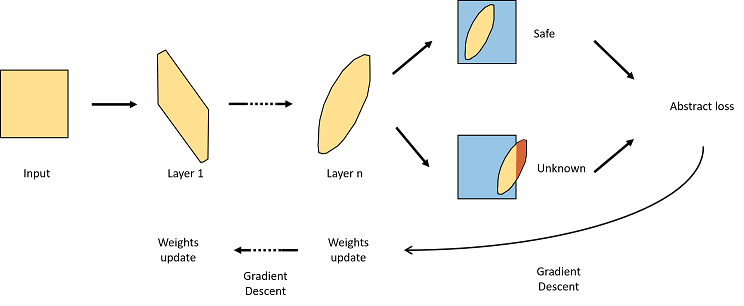

We aim to train a network to be locally robust around each point of its training dataset: for any perturbation of magnitude at most \(\epsilon\), the output of the network should stay the same. For a classifier this means the same predicted class; for regression it can be relaxed to a small distance between the two output values. The value of \(\epsilon\) is usually linked to the dataset and to the intensity of the perturbation we allow on it.
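Written out for a classifier \(f\) with class scores \(f_k\), and assuming the perturbation is measured element-wise in the \(\ell_\infty\) norm (an assumption that matches the per-pixel \(\epsilon\) tensor used later in this tutorial, but is not stated explicitly in the text), local robustness around a training point \(x\) can be read as:

\[
\forall x' \;:\; \|x' - x\|_\infty \le \epsilon \;\Longrightarrow\; \arg\max_k f_k(x') = \arg\max_k f_k(x)
\]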

The goal of the training is to learn that the whole \(\epsilon\)-neighbourhood of an input produces the same output. For this, we use interval arithmetic: instead of training the network on numbers, we feed it intervals and carry out every operation of the network on those intervals.

We can then compute a robust loss on the output intervals and train the network on it.
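As a rough illustration of interval propagation, the sketch below pushes an \(\epsilon\)-box through a tiny two-layer network in plain NumPy. It does not use Aidge: the helper names `affine_bounds` and `relu_bounds`, the layer sizes, and the random weights are all assumptions made for the example. It then checks empirically that randomly perturbed inputs always land inside the computed output bounds.

```python
import numpy as np


def affine_bounds(lower, upper, weight, bias):
    """Propagate the box [lower, upper] through y = weight @ x + bias."""
    center = (lower + upper) / 2.0
    radius = (upper - lower) / 2.0
    out_center = weight @ center + bias
    # The radius can only grow through |weight|, whatever the sign of each entry.
    out_radius = np.abs(weight) @ radius
    return out_center - out_radius, out_center + out_radius


def relu_bounds(lower, upper):
    """ReLU is monotone, so it can be applied to each bound separately."""
    return np.maximum(lower, 0.0), np.maximum(upper, 0.0)


rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=4)  # hypothetical input
eps = 0.01                         # perturbation radius
w1, b1 = rng.normal(size=(5, 4)), rng.normal(size=5)
w2, b2 = rng.normal(size=(3, 5)), rng.normal(size=3)

# Interval forward pass: FC -> ReLU -> FC
lo, up = affine_bounds(x - eps, x + eps, w1, b1)
lo, up = relu_bounds(lo, up)
lo, up = affine_bounds(lo, up, w2, b2)


def forward(v):
    """Ordinary (non-interval) forward pass of the same network."""
    return w2 @ np.maximum(w1 @ v + b1, 0.0) + b2


# Every perturbed input inside the box must produce an output inside [lo, up].
samples = x + rng.uniform(-eps, eps, size=(1000, 4))
outputs = np.array([forward(s) for s in samples])
inside = bool(np.all((outputs >= lo - 1e-9) & (outputs <= up + 1e-9)))
print("all sampled outputs inside the bounds:", inside)
```

The bounds are guaranteed to contain every reachable output, but they are generally looser than the true range; training on them is what tightens them.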

Set up

[ ]:

# install required modules

!pip install torchvision==0.14.1+cpu --extra-index-url https://download.pytorch.org/whl/cpu

!pip install numpy==1.24.1

!pip install ipywidgets widgetsnbextension

[ ]:

import aidge_core

import aidge_backend_cpu

import numpy as np

import aidge_learning

assert aidge_backend_cpu

import sys

import os

sys.path.append(os.path.abspath(os.path.join("../..")))

import tuto_utils

Dataset

First we load the MNIST dataset and create a data provider for it.

[ ]:

import torchvision

from torchvision import transforms

from aidge_learning.confiance.training.dataset import AidgeDataset

# Load training dataset

transform = transforms.Compose([transforms.ToTensor()])

trainset = torchvision.datasets.MNIST(

root="./data", train=True, download=True, transform=transform

)

aidge_dataset = AidgeDataset(

data=trainset, input_shape=[1, 1, 28, 28]

) # wrapper for the dataset

input_shape = aidge_dataset.shape

# Create data provider with batching

batch_size = 256

aidge_dataprovider = aidge_core.DataProvider(

aidge_dataset, batch_size=batch_size, shuffle=False, drop_last=True

)

Model

We can create a small fully connected model here.

[ ]:

from aidge_learning.confiance.training.utils import init_model_weights

model = aidge_core.sequential(

[

aidge_core.FC(in_channels=np.prod(input_shape), out_channels=100, name="FC_0"),

aidge_core.ReLU(name="Relu_1"),

aidge_core.FC(in_channels=100, out_channels=100, name="FC_2"),

aidge_core.ReLU(name="Relu_3"),

aidge_core.FC(in_channels=100, out_channels=100, name="FC_4"),

aidge_core.ReLU(name="Relu_5"),

aidge_core.FC(in_channels=100, out_channels=10, name="FC_6"),

]

)

# for later reference keep the output node name

out_name = model.get_ordered_nodes()[-1].name()

# Compiling model and initializing weights

model.compile("cpu", aidge_core.dtype.float32, dims=[input_shape])

init_model_weights(

model, ibp_init=True

) # initialize the weights with a distribution better suited to Interval Bound Propagation

Interval model

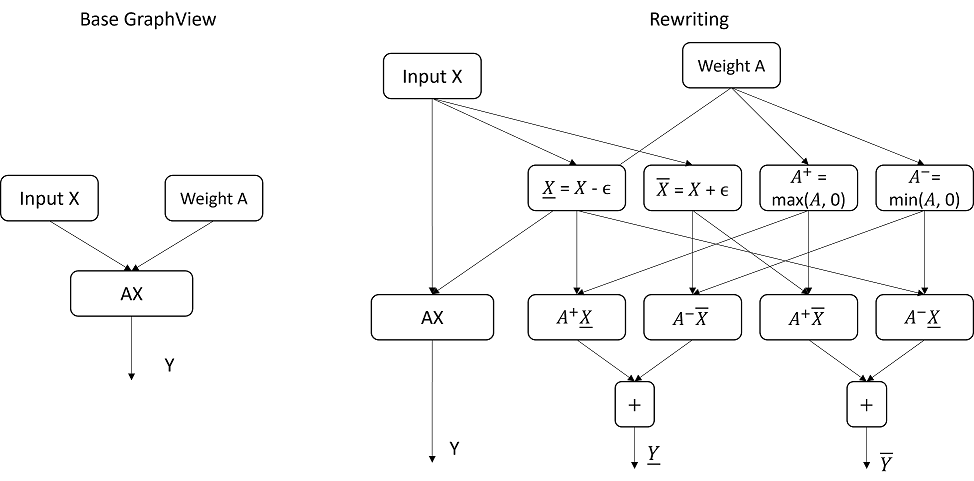

From this model, we now want to build a GraphView that does both classical inference and interval propagation. This is done by rewriting the GraphView with interval arithmetic, as shown below.

This rewriting is applied to every operator that performs a multiplication (or matrix multiplication) in the GraphView, as this operation is not linear on intervals (for instance, a negative weight swaps the lower and upper bounds). Note: For now only Conv and FC layers are properly handled.
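To see why a multiplication needs special handling, the NumPy sketch below compares a naive endpoint-wise evaluation of an FC layer with one standard interval formulation that splits the weights by sign. The function name `fc_bounds_sign_split` and the numbers are hypothetical, and this is not necessarily the exact rewriting that `get_interval_network` performs.

```python
import numpy as np


def fc_bounds_sign_split(lower, upper, weight, bias):
    """Interval version of y = weight @ x + bias using a sign split of the weights."""
    w_pos = np.maximum(weight, 0.0)  # positive weights keep the bound order
    w_neg = np.minimum(weight, 0.0)  # negative weights swap lower and upper
    out_lower = w_pos @ lower + w_neg @ upper + bias
    out_upper = w_pos @ upper + w_neg @ lower + bias
    return out_lower, out_upper


weight = np.array([[1.0, -2.0]])
bias = np.array([0.5])
lower = np.array([0.0, 1.0])
upper = np.array([1.0, 2.0])

# Naive approach: push each endpoint through the layer independently.
naive_lower = weight @ lower + bias  # [-1.5]
naive_upper = weight @ upper + bias  # [-2.5], below the "lower" bound

# Sound approach: the true output range is [-3.5, -0.5].
out_lower, out_upper = fc_bounds_sign_split(lower, upper, weight, bias)
print("naive:", naive_lower, naive_upper)
print("sound:", out_lower, out_upper)
```

With a negative weight the naive result is not even a valid interval, which is why each multiplying operator has to be rewritten rather than simply evaluated twice.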

[ ]:

from aidge_learning.confiance.training.interval_builder import get_interval_network

# This function will build the interval network through interval arithmetic

interval_model = get_interval_network(model.clone())

# Compiling the model and creating Scheduler

interval_model.compile(

"cpu", aidge_core.dtype.float32, dims=[input_shape, input_shape]

) # two inputs

interval_scheduler = aidge_core.SequentialScheduler(interval_model)

# We can visualize the model as a mermaid file, however the addition of two copies of the model makes it hard to read

interval_model.save("interval_model")

tuto_utils.visualize_mmd("interval_model.mmd")

We can see that the GraphView now has 2 inputs: the original input and an epsilon parameter to parametrise the perturbation intensity. It also has 3 outputs: the original model output, a lower bound and an upper bound.

Training the model

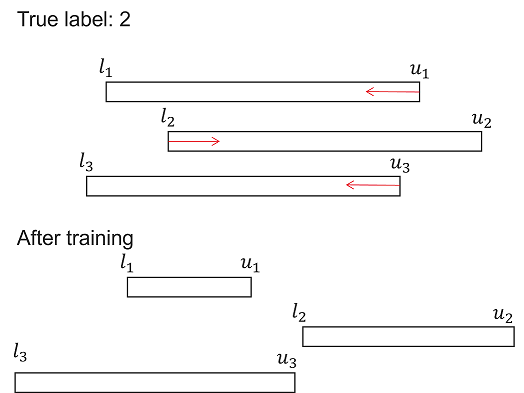

The goal of our robust training will be either to reduce the width of the output interval (upper bound - lower bound) or to make sure that the bounds of the correct output lie above the bounds of all the others. Here we are using MNIST for a classification task, so we will use the latter method.

To illustrate this, let’s consider a classification problem with only 3 output classes: 1, 2, 3. Given a certain \(\epsilon\), our interval network provides, for each input sample, bounds on the output score of each class: \([l_1, u_1], [l_2, u_2], [l_3, u_3]\). Suppose the true label of our sample is class 2. To ensure that class 2 is always selected even under a perturbation of size \(\epsilon\), we must have \(l_2 > u_1\) and \(l_2 > u_3\). The goal of the training is exactly that: raising the lower bound of class 2 and lowering the upper bounds of the other classes.
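The condition above can be checked in a few lines. This is a minimal NumPy sketch with made-up bounds; `is_certified` is a hypothetical helper, and labels are 0-based here, so class 2 of the text sits at index 1.

```python
import numpy as np


def is_certified(lower, upper, label):
    """True if the true class lower bound exceeds every other class upper bound."""
    other_upper = np.delete(upper, label)  # upper bounds of all competing classes
    return bool(lower[label] > other_upper.max())


# Bounds [l_k, u_k] for three classes; the true class is index 1.
lower = np.array([-1.0, 2.0, 0.0])
upper = np.array([0.5, 3.0, 1.5])
certified = is_certified(lower, upper, label=1)  # 2.0 > max(0.5, 1.5)

# Same sample with a looser bound on the last class: 2.0 > 2.5 fails.
wide_upper = np.array([0.5, 3.0, 2.5])
not_certified = is_certified(lower, wide_upper, label=1)

print(certified, not_certified)  # True False
```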

We will achieve that by using a special cross entropy loss later on. Note: to simply reduce the interval width, we can instead add a special output to the network that computes the width (upper - lower) and train it with an MSE loss.
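One common way to turn this objective into a loss is to apply cross entropy to "worst-case" scores: the lower bound for the true class and the upper bound for every other class. The sketch below shows that formulation in NumPy. Whether `aidge_learning.loss.RobustCELoss` is implemented exactly this way is an assumption; the helper names and the numbers are hypothetical.

```python
import numpy as np


def worst_case_logits(lower, upper, labels):
    """Lower bound for the true class, upper bound for all the other classes."""
    one_hot = np.eye(lower.shape[1], dtype=bool)[labels]
    return np.where(one_hot, lower, upper)


def cross_entropy(logits, labels):
    """Mean cross entropy over a batch, computed with a stable log-softmax."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())


logits = np.array([[0.2, 2.5, 0.1]])  # clean scores for one sample
labels = np.array([1])
radius = 0.3                          # half-width of each output interval

clean_loss = cross_entropy(logits, labels)
robust_loss = cross_entropy(
    worst_case_logits(logits - radius, logits + radius, labels), labels
)
print(f"clean={clean_loss:.4f} robust={robust_loss:.4f}")
```

Minimising the robust loss pushes the true class lower bound up and the other upper bounds down, which is the separation described above. With zero-width intervals it reduces to the clean loss.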

The training will consist of two phases:

A warmup period, with \(\epsilon = 0\), to increase the clean accuracy of the model before anything else.

A ramp up period where \(\epsilon\) will gradually increase until its maximum value.

The warmup period is necessary to first reach good performance on the basic model before training the robust model. It can be skipped if we use a pretrained model. In the ramp up period, we increase \(\epsilon\) gradually to keep the bounds from exploding during training, which would prevent the model from converging. For this we can use a scheduler so that \(\epsilon\) increases linearly at each epoch.

Here we will use a max \(\epsilon\) value of 0.01 as our goal. This value depends heavily on the dataset, and a larger \(\epsilon\) often requires a longer training with more epochs so that \(\epsilon\) still increases slowly. 5 epochs will be enough for \(\epsilon = 0.01\) but we might need 50 for \(\epsilon = 0.1\).
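The two phases can be pictured with a small pure-Python schedule. This is only a sketch: `epsilon_at` is a hypothetical function, the epoch counts are illustrative, and the choice to reach the maximum on the last ramp-up epoch is an assumption that may differ from what the library's `LinearScheduler` does.

```python
def epsilon_at(epoch, warmup_epochs, rampup_epochs, max_epsilon):
    """Return 0 during warmup, then a linear ramp that ends at max_epsilon."""
    if epoch < warmup_epochs:
        return 0.0
    steps_done = epoch - warmup_epochs + 1  # 1 on the first ramp-up epoch
    return min(max_epsilon, max_epsilon * steps_done / rampup_epochs)


# Illustrative values: 2 warmup epochs, 8 ramp-up epochs, target epsilon 0.01
schedule = [epsilon_at(e, 2, 8, 0.01) for e in range(10)]
for epoch, eps in enumerate(schedule):
    print(f"epoch {epoch}: epsilon = {eps:.5f}")
```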

[ ]:

from aidge_learning.confiance.training.scheduler import LinearScheduler

max_epsilon = 0.01

warmup_epochs = 2

rampup_epochs = 8

eps_scheduler = LinearScheduler(step=max_epsilon / rampup_epochs, max_val=max_epsilon)

We can now set up the optimizer and start the main training loop.

[ ]:

from aidge_learning import step_lr, Adam

# We use Adam optimizer

interval_opt = Adam()

interval_opt.set_learning_rate_scheduler(step_lr(0.001, 10000))

interval_opt.set_parameters(list(aidge_core.producers(interval_model)))

[ ]:

from tqdm.notebook import tqdm

for epoch in range(warmup_epochs + rampup_epochs):
    epsilon = eps_scheduler.get_value(epoch - warmup_epochs)

    # Progress bar to monitor training
    pbar = tqdm(
        enumerate(aidge_dataprovider), total=len(aidge_dataprovider), file=sys.stdout
    )
    phase = "Warmup" if epoch < warmup_epochs else "Robust"
    pbar.set_description(
        f"Epoch {epoch+1}/{warmup_epochs + rampup_epochs} [{phase}] (ε={epsilon:.6f})"
    )

    # Iterate over data
    for i, (data, label) in pbar:
        # Create epsilon tensor
        epsilon_tensor = np.full(np.array(data).shape, epsilon, dtype=np.float32)

        # Forward pass
        interval_scheduler.forward(data=[data, aidge_core.Tensor(epsilon_tensor)])

        # Get outputs
        pred = interval_model.get_node(out_name).get_operator().get_output(0)
        lower = (
            interval_model.get_node(f"{out_name}_lower").get_operator().get_output(0)
        )
        upper = (
            interval_model.get_node(f"{out_name}_upper").get_operator().get_output(0)
        )
        width = upper - lower
        acc = aidge_learning.metrics.Accuracy(pred, label, 1)[0]

        # Clean CrossEntropy Loss
        clean_ce_loss = aidge_learning.loss.CELoss(pred, label)

        # Robust CE Loss (based on above explanation)
        rob_ce_loss = aidge_learning.loss.RobustCELoss(lower, upper, label)

        # Backward and update interval model parameters
        interval_scheduler.backward()
        interval_opt.update()
        interval_opt.reset_grad()

        pbar.set_postfix(
            {
                "clean_loss": float(clean_ce_loss[0]),
                "accuracy": (acc / batch_size) * 100,
                "rob_loss": float(rob_ce_loss[0]),
                "width": np.mean(np.array(width)),
            }
        )

The model is now trained. We can remove the intervals by extracting the original model and then evaluate its robustness on the test set:

[ ]:

from aidge_learning.confiance.training.utils import evaluate_rob

from aidge_learning.confiance.training.interval_builder import extract_model

# Extracting model

trained_model = extract_model(model, interval_model)

# Get test dataset

transform = transforms.Compose([transforms.ToTensor()])

testset = torchvision.datasets.MNIST(

root="./data", train=False, download=True, transform=transform

)

test_dataset = AidgeDataset(data=testset, input_shape=[1, 1, 28, 28])

test_dataprovider = aidge_core.DataProvider(

test_dataset, batch_size=256, shuffle=False, drop_last=True

)

print("Certified accuracy at ε=0.01:")

evaluate_rob(test_dataprovider, trained_model, 0.01, input_shape)

# We should have around 96% accuracy and 93% robust accuracy

print("Certified accuracy at ε=0.1:")

evaluate_rob(test_dataprovider, trained_model, 0.1, input_shape)

# We did not train the model for ε = 0.1, so the robust accuracy is very low
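For reference, the two numbers reported above can be understood as follows: clean accuracy counts samples whose plain prediction is correct, while certified (robust) accuracy counts samples whose true class lower bound beats every other upper bound. The NumPy sketch below computes both on made-up scores. It is an illustration of the metric under these assumptions, not the implementation of `evaluate_rob`, and `clean_and_certified_accuracy` is a hypothetical name.

```python
import numpy as np


def clean_and_certified_accuracy(pred, lower, upper, labels):
    """Return (clean accuracy, certified accuracy) as fractions in [0, 1]."""
    idx = np.arange(len(labels))
    clean = pred.argmax(axis=1) == labels
    # Hide the true class so the max runs over the competing classes only.
    masked_upper = upper.copy()
    masked_upper[idx, labels] = -np.inf
    certified = lower[idx, labels] > masked_upper.max(axis=1)
    return float(clean.mean()), float(certified.mean())


pred = np.array(
    [
        [2.0, 0.0, 0.0],  # correct with a large margin
        [0.0, 1.0, 0.8],  # correct, but the margin is smaller than the interval
        [0.0, 0.0, 3.0],  # correct with a large margin
        [1.0, 2.0, 0.0],  # wrong prediction (true class is 0)
    ]
)
labels = np.array([0, 1, 2, 0])
radius = 0.3  # half-width of every output interval

clean_acc, cert_acc = clean_and_certified_accuracy(
    pred, pred - radius, pred + radius, labels
)
print(clean_acc, cert_acc)  # 0.75 0.5
```

A certified sample is always correctly classified, so certified accuracy can never exceed clean accuracy.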

Running inference with the interval model

The interval model can also be used directly to run inference and get the lower and upper bounds at the same time.

[ ]:

from aidge_learning.confiance.training.interval_builder import get_outputs

# Loading an input

digit = np.load("input_digit.npy")

input_tensor = aidge_core.Tensor(digit)

# Chosen perturbation

epsilon = aidge_core.Tensor(np.full(digit.shape, 0.01, dtype=np.float32))

# Run inference

interval_scheduler.forward(data=[input_tensor, epsilon])

out, out_lower, out_upper = get_outputs(model, interval_model)

arg_max_out = np.argmax(out)

print("Aidge prediction =", arg_max_out)

print("Output:", out[arg_max_out])

print("Lower bound:", out_lower[arg_max_out])

print("Upper bound:", out_upper[arg_max_out])

All in one function and more

You can also find the complete robust training pipeline in the train_interval_model function. For its full documentation, refer to the README of the aidge_learning.confiance module.

[ ]:

from aidge_learning.confiance.training_interval import train_interval_model

train_interval_model(aidge_dataprovider, model, input_shape, method="robust", eps=0.01)